You never stop to think that sending your kids to school can be a problem, but it can be. Kids are bullies, but teachers are too! Research demonstrates that abuse of all forms hurts us and our societies badly. Isn't it time we stopped hurting our children, and ourselves?

Surveys

Featured Articles

- Gender and Sexual Violence
- The American Nightmare
- The Problem of Child Labor in US Agriculture
- What is Religion?
- If Society is the Disease, is Cannabis the Cure?
- Care Bears vs. Transformers: Gender Stereotypes in Advertisements
- A Fresh Perspective on Knowledge Sharing: The EJS Uprising
- Sociology in High School: Preparing Minds for Tomorrow

Addressing the Academy

- The Rarified World of Max Weber’s Honoratioren and the Voting Cows

  Max Weber, one of this planet's most famous sociologists, was not a fan of modern society. He said that we are all stuck in an "iron cage of capitalism" and that this cage would slowly squeeze the very soul out of our skin. In a world of pervasive Prozac and ubiquitous mental angst, is the sociologist's prognostication really that far from reality? In this article, Tony Waters explores some of the details of the soul crush we all experience in the corralled world of Bos primigenius academicus.

- The Business of Higher Education
- Bill Gates is an Idiot: A Recipe for Educational Failure
- The University, Accountability, and Market Discipline in the Late 1990s
- IMPACT! Academics, Citation, and Scholarly Self Delusion
- Ward Churchill, the AAUP, and Academic Freedom
- Fatuous, Naïve, and Bold at the Same Time: Welcome to the Wonderful World of Peer Review

Classroom Controversy

- Ding Dong the Alpha Male is Dead

  The scientist who coined the term "alpha male" has recanted! There's actually no such thing, he says. Could it be nothing more than ideological self-delusion?

- A Bungling Fox in the Henhouse: The Corporatization of Higher Education
- Sociology versus Psychology – The Social Context of Psychological Pathology and Child Abuse
- False Gods and Monsters: The Terrible Costs of the Joe Paterno Cult at Penn State
- Athletes and the ‘Club’: Nothing Good Ever Happens After Midnight
- America Is Under Attack??

Current Events

Falling dominoes

What's going on with the world? It has been only eight years since the last global financial crisis, but here we go again. The crises are coming faster and faster. It's not going to be too long before recovery is no longer possible. Time to wake up and smell the coffee, or go down with the sinking ship.

The Greek Financial Crises – What is Money

What is money? And why is debt such a problem? The answers lie within.

Feminism Redux – Grande bites back at Bette

So, in an interview, Bette Midler said that she was disappointed with the risqué way young Hollywood tartlets were parading their bodies around. She specifically targeted little elf Ariana Grande when she said, and I quote, “It’s always surprising to see someone like Ariana Grande with that silly high voice, a very wholesome voice, slithering around on a couch looking so ridiculous. I mean, it’s silly beyond belief and I don’t know who’s telling her to do it. I wish they’d stop. I wish they’d stop. But it’s not my business, I’m not her mother. Or her manager. Maybe they tell them that’s what you’ve got to do. Sex sells. Sex has always sold…”

A Biracial Journey to Understanding Identity

I can remember at about the age of eleven being at one of the many Arabic parties I would attend growing up. The women were all laughing and dancing, their head scarves off, no men in the room. They wore beautiful dresses and their hair and makeup were fully done. They looked so elegant as I watched them belly dance effortlessly. I remember wishing my hair was as dark and as thick as theirs. I also wished my skin was as tan and my eyebrows as pretty. Why did I always have to feel like the white girl at the Arabic party? My cousins, aunts, and grandmother often introduced me as such: “This is Amira, Mazen’s daughter. She’s American.”

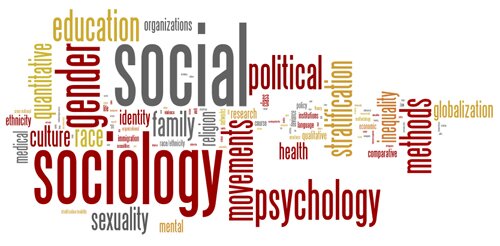

The Socjourn A New Media Journal of Sociology and Society
